Applications

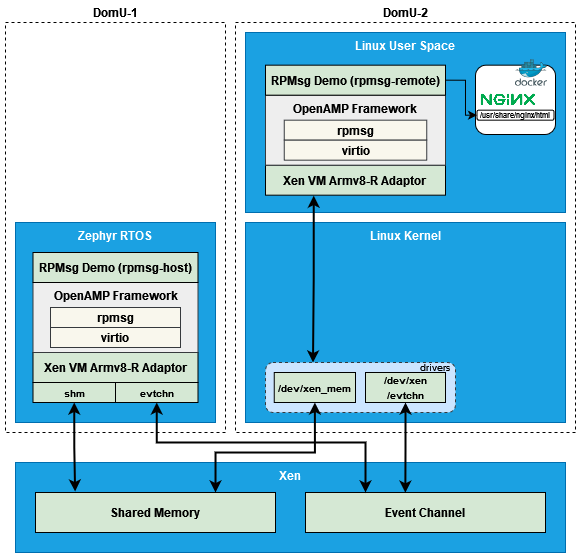

The following diagram shows the architecture of the applications implemented in this Software Stack:

Inter-VM Communication

As shown in the architecture diagram above, Inter-VM communication in this Software Stack uses a shared memory mechanism to transfer data between VMs. In the application layer, it is implemented using the OpenAMP framework. The Xen hypervisor provides the communication path through static shared memory and a static event channel. The guest OSes (Linux and Zephyr) in the middle expose the static shared memory and the static event channel to the upper-layer applications.

For the implementation of the static shared memory and the static event channel in Xen and the two guest OSes, refer to the sections Hypervisor (Xen), Linux Kernel and Zephyr in Components.

RPMsg (Remote Processor Messaging) is a messaging protocol that enables communication between two VMs and can be used by Linux as well as real-time OSes. The RPMsg implementation in the OpenAMP library is based on virtio.

This Software Stack introduces the meta-openamp layer to provide support for the OpenAMP library.

Docker Container

Support for Docker containers in this Software Stack is provided by the docker-ce recipe in the meta-virtualization layer.

Running Docker also requires kernel-module-xt-nat, which is enabled by meta-armv8r64-extras/dynamic-layers/virtualization-layer/recipes-containers/docker/docker-ce_git.bbappend.

Demo Applications

This Software Stack includes two demo applications that demonstrate the two use scenarios described in the High Level Architecture section in Introduction:

RPMsg Demo (components/apps/rpmsg-demo)

Docker Container Hosted Nginx (meta-armv8r64-extras/dynamic-layers/virtualization-layer/recipes-demo/nginx-docker-demo)

These two applications can work together to complete the following process:

The program running in Zephyr periodically collects the data of the system running status

Zephyr sends sampled data to Linux via RPMsg

After receiving the data, Linux stores the data in a local file

The Nginx web server running in a docker container serves this file for external users visiting through the HTTP protocol

Repeat the above steps so that users can get continuously updated system running status

RPMsg Demo

The RPMsg Demo application consists of two parts:

rpmsg-host runs in the Zephyr domain to sample the data of the system running status and sends the data to rpmsg-remote

rpmsg-remote runs in the Linux domain to receive the data and stores it in a local file /usr/share/nginx/html/zephyr-status.html
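
The storing step on the Linux side can be sketched as follows. This is a minimal illustration and not the code of rpmsg-remote: the helper name store_status and the write-to-temporary-file-then-rename approach are assumptions made here so that a web server reading the file would not see a partially written copy.

```c
#include <stdio.h>

/*
 * Hypothetical helper (not part of rpmsg-demo): write one received payload
 * to `path`, replacing any previous content. The data is written to a
 * temporary file first and then renamed over the destination.
 * Returns 0 on success, -1 on failure.
 */
int store_status(const char *path, const void *data, size_t len)
{
    char tmp_path[512];
    int n = snprintf(tmp_path, sizeof(tmp_path), "%s.tmp", path);
    if (n < 0 || (size_t)n >= sizeof(tmp_path)) {
        return -1; /* path too long for the temporary name */
    }

    FILE *fp = fopen(tmp_path, "wb");
    if (fp == NULL) {
        return -1;
    }

    size_t written = fwrite(data, 1, len, fp);
    int close_rc = fclose(fp);
    if (written != len || close_rc != 0) {
        remove(tmp_path); /* do not leave a truncated temporary file */
        return -1;
    }

    /* On POSIX systems rename() replaces the destination in one step. */
    if (rename(tmp_path, path) != 0) {
        remove(tmp_path);
        return -1;
    }
    return 0;
}
```

A receive handler could call store_status("/usr/share/nginx/html/zephyr-status.html", data, len) for each message it gets from rpmsg-host.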

These two parts communicate with each other using the OpenAMP framework in the application layer, and the static shared memory and static event channel provided by Xen in the lower layer.

The recipes for this demo application are provided by meta-armv8r64-extras/dynamic-layers/virtualization-layer/recipes-kernel/zephyr-kernel/zephyr-rpmsg-demo.bb and meta-armv8r64-extras/dynamic-layers/virtualization-layer/recipes-demo/rpmsg-demo/rpmsg-demo.bb. The source code can be found in directory components/apps/rpmsg-demo.

Docker Container Hosted Nginx

In the Docker Container Hosted Nginx demo application, the Nginx server serves the file /usr/share/nginx/html/zephyr-status.html, and external users can view the data in a web browser.

This demo application starts automatically by default. To start it manually, run the script /usr/share/nginx/utils/run-nginx-docker.sh in the Linux domain. See the section Virtualization in Reproduce of User Guide for example usage.

The recipe for this demo application is provided by meta-armv8r64-extras/dynamic-layers/virtualization-layer/recipes-demo/nginx-docker-demo/nginx-docker-demo.bb.

Run Demo Applications

In the default configuration, the above two demo applications run automatically after the system starts. See the section Virtualization in Reproduce of User Guide for example usage.

To prevent them from running automatically in the Linux domain, set XEN_DOM0LESS_DOM_LINUX_DEMO_AUTORUN to 0 at build time. For example:

    # Build
    XEN_DOM0LESS_DOM_LINUX_DEMO_AUTORUN=0 \
    kas build v8r64/meta-armv8r64-extras/kas/virtualization.yml

Then run the demo applications manually using the following commands in the Linux domain:

    # Start Nginx web server
    /usr/share/nginx/utils/run-nginx-docker.sh

    # Start rpmsg-demo
    rpmsg-remote

Limitations and Improvements

The shared memory and event channel mechanisms that Inter-VM communication relies on are still evolving in Xen, Linux, and Zephyr, which limits what the RPMsg Demo application can do.

The RPMsg Demo application is mainly intended to demonstrate communication between VM guests within the Xen hypervisor. It does not have a sophisticated fault tolerance and exception recovery mechanism. If an exception occurs, in the extreme case it may be necessary to restart the FVP before the next demonstration, especially when the application is run manually.

In the current implementation, at least the following improvements can be made at the application level:

In rpmsg-host, when send_message fails, the current behavior is to exit the loop directly. One improvement is to add a retry mechanism. If sending continues to fail, the application can fall back to calling rpmsg_init_vdev again to set up a new RPMsg channel. The detailed code is in components/apps/rpmsg-demo/demos/zephyr/rpmsg-host/src/main.c.

Implement rpmsg-host based on the Zephyr IPC subsystem RPMsg Service, which supports multiple endpoints and can therefore support multiple RPMsg channels. The reference code can be found here.
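
The retry-then-reinitialize idea from the first improvement could look roughly like the sketch below. It is not taken from main.c: the names send_with_retry, send_fn, reinit_fn, fake_send and fake_reinit are hypothetical, and the real send_message and rpmsg_init_vdev calls have different signatures that would need to be wrapped to fit these callback types.

```c
#include <stddef.h>

/* Hypothetical callback types standing in for the demo's own functions. */
typedef int (*send_fn)(const void *data, size_t len); /* 0 on success */
typedef int (*reinit_fn)(void);                       /* 0 on success */

/*
 * Try to send up to `max_attempts` times. If every attempt fails, call
 * `reinit` once to rebuild the channel and make one final attempt.
 * Returns 0 on success, -1 if the message could not be sent.
 */
int send_with_retry(send_fn send, reinit_fn reinit,
                    const void *data, size_t len, int max_attempts)
{
    for (int attempt = 0; attempt < max_attempts; attempt++) {
        if (send(data, len) == 0) {
            return 0;
        }
    }
    if (reinit == NULL || reinit() != 0) {
        return -1; /* no way to rebuild the channel */
    }
    return send(data, len) == 0 ? 0 : -1;
}

/* Stand-ins for illustration only: the first `fail_budget` sends fail. */
int fail_budget;
int reinit_calls;

int fake_send(const void *data, size_t len)
{
    (void)data;
    (void)len;
    if (fail_budget > 0) {
        fail_budget--;
        return -1;
    }
    return 0;
}

int fake_reinit(void)
{
    reinit_calls++;
    return 0;
}
```

With fail_budget set to 3 and max_attempts set to 3, all three attempts fail, fake_reinit runs once, and the final attempt succeeds. A real implementation would probably also wait between attempts and bound the number of reinitializations.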